| File | Purpose |

|---|---|

tda_cd_benchmark.ipynb |

Benchmarking on class-distribution shifts |

tda_runner_experiments.py |

Hyperparameter tuning and sensitivity analysis |

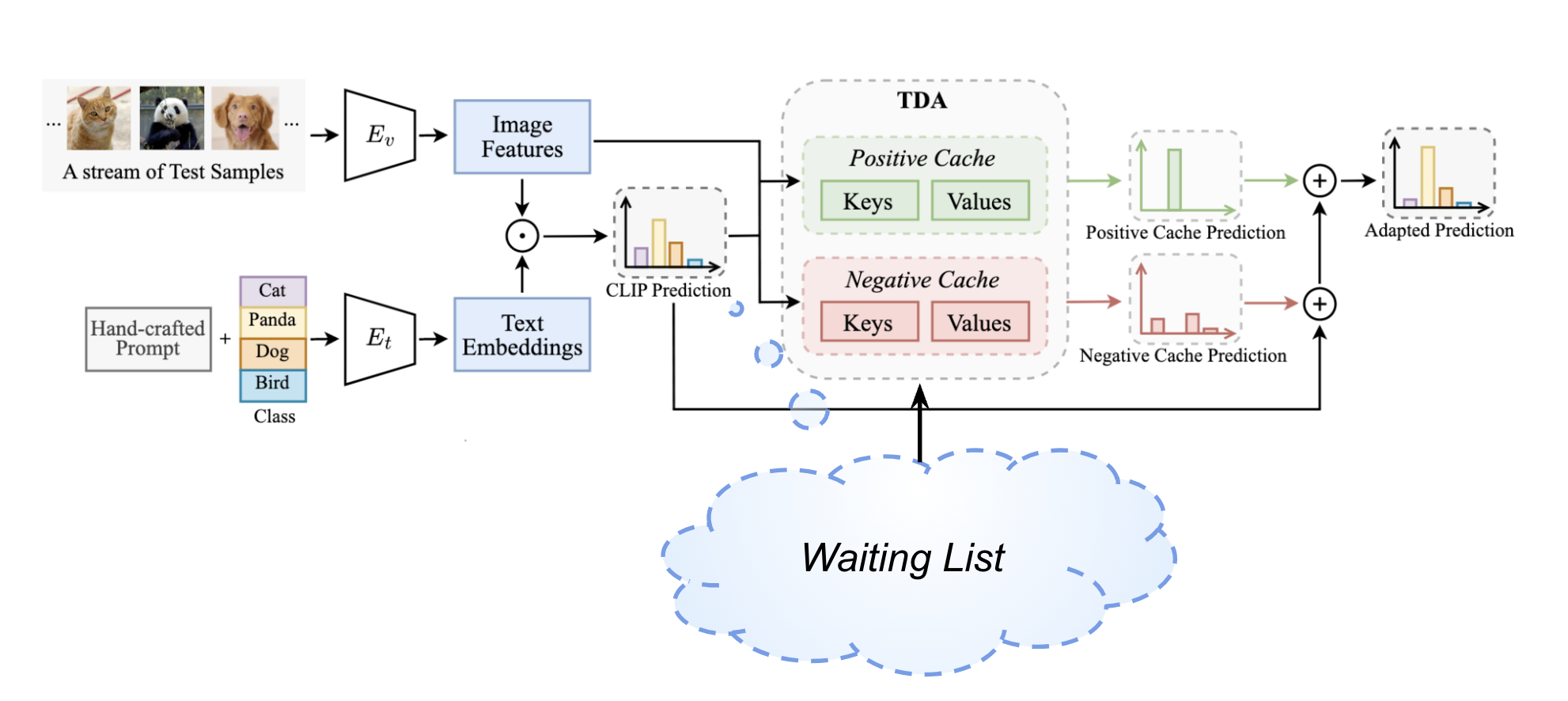

tda_runner_with_waiting.py |

Waiting list enhancement implementation |

**Waiting List Strategy**: a novel enhancement for improving the robustness of test-time adaptation.