Anomaly Detection on Grape Images Using CNNs

Bachelor's thesis project @ UniRoma1

🍇 Project Overview

This project is part of the European Canopies initiative, aimed at enhancing human-robot collaboration in precision agriculture.

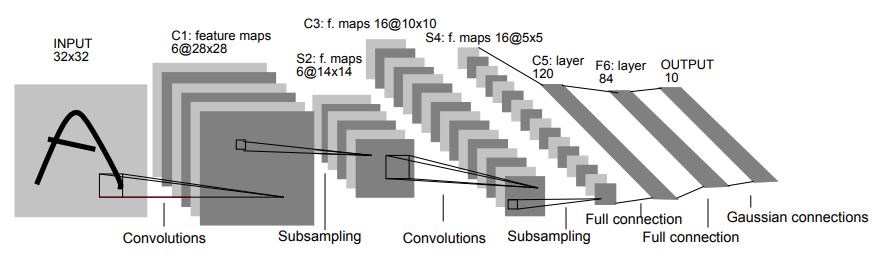

The goal is to automatically detect anomalies in grape berries using Convolutional Neural Networks (CNNs), particularly a LeNet-based architecture, and compare its performance with a benchmark study.

📘 Summary

- Problem: Automatically distinguish between healthy and damaged grapes to improve crop quality and plant health monitoring.

- Solution: A CNN trained on a custom dataset of grape images, optimized via hyperparameter tuning and evaluated against a reference paper.

- Tools: Python, PyTorch, Google Colab, Matplotlib, Seaborn.

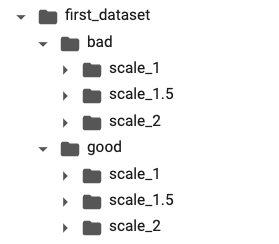

🗂️ Dataset

The dataset consists of RGB patches captured at multiple zoom levels (this work focuses on the 1.5x level), each containing a single grape.

Organized into:

- good/ (healthy grapes)

- bad/ (damaged grapes)
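A minimal sketch of indexing the two folders into (path, label) pairs follows. It assumes "bad" is the positive class (label 1), which matches the README's later use of "false positive" for healthy grapes flagged as damaged. This matters because torchvision's `ImageFolder` assigns indices alphabetically and would map `bad/` to 0 and `good/` to 1. The accepted file extensions are a hypothetical choice.

```python
from pathlib import Path

# Assumption: damaged berries ("bad") are the positive class for BCEWithLogitsLoss.
CLASS_TO_LABEL = {"good": 0, "bad": 1}
# Hypothetical: adjust to the actual image formats in the dataset.
IMAGE_SUFFIXES = {".png", ".jpg", ".jpeg"}


def index_dataset(root):
    """Return (path, label) pairs from root/good and root/bad (good first, then bad)."""
    samples = []
    for class_name, label in CLASS_TO_LABEL.items():
        class_dir = Path(root) / class_name
        # Sort for a deterministic order; skip non-image files such as notes.
        for path in sorted(class_dir.iterdir()):
            if path.suffix.lower() in IMAGE_SUFFIXES:
                samples.append((path, label))
    return samples
```

Counting the labels of the returned list is a quick way to check the class ratio before deciding how much augmentation is needed.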

To handle dataset imbalance (1:4 bad to good), image augmentation was applied via 90°, 180°, and 270° rotations.
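The rotation augmentation can be sketched with `torch.rot90`, which rotates in the plane of the last two (spatial) dimensions. The sketch assumes square patches (a 90° rotation swaps height and width) and that it is applied to the minority `bad/` class. With a 1:4 ratio, adding three rotated copies of each damaged patch would roughly balance the classes, but the README does not state exactly which images were rotated.

```python
import torch


def rotation_augment(images: torch.Tensor) -> torch.Tensor:
    """Return the batch followed by its 90°, 180°, and 270° rotations.

    images: tensor of shape (N, C, H, W) with H == W (square patches assumed).
    Output: tensor of shape (4 * N, C, H, W), ordered 0°, 90°, 180°, 270°.
    """
    # k counts quarter turns; dims=(-2, -1) rotates the spatial plane only.
    rotated = [torch.rot90(images, k, dims=(-2, -1)) for k in range(4)]
    return torch.cat(rotated, dim=0)
```

Rotations are a natural fit here because a grape photographed from above has no canonical orientation, so rotated copies remain realistic samples.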

🧠 State of the Art

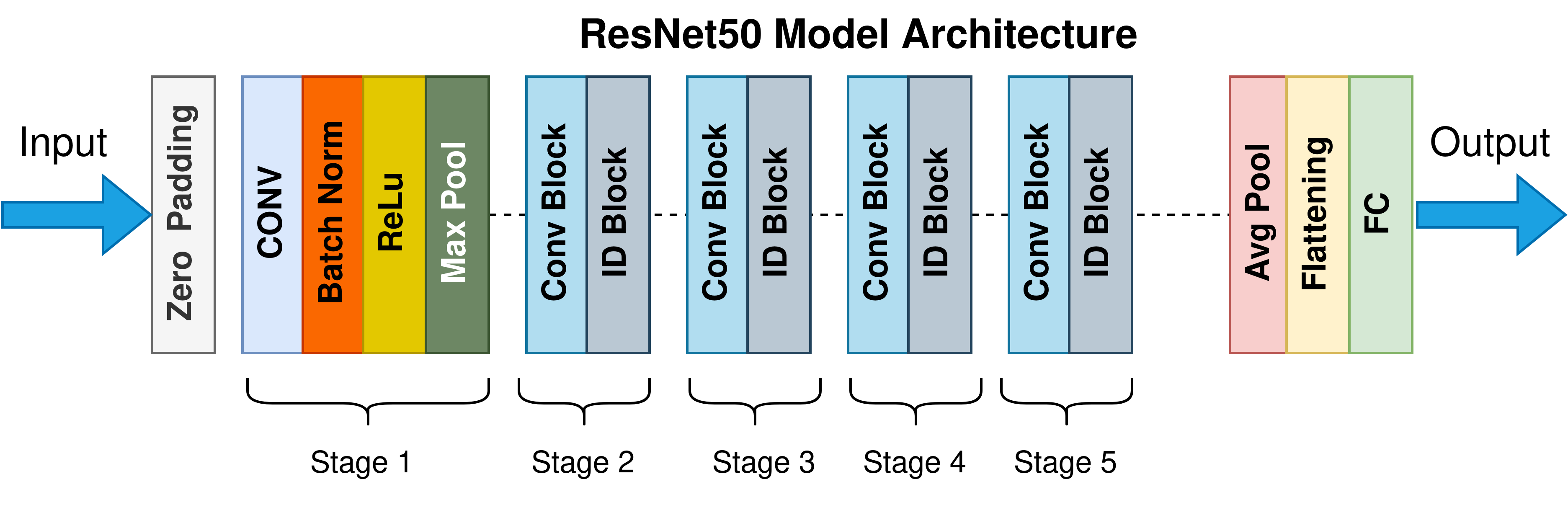

The benchmark paper used a LeNet CNN and compared it with the much deeper ResNet50 (both pretrained and trained from scratch).

Despite ResNet50's greater depth and complexity, LeNet was more stable and more accurate on this specific binary classification task.
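For reference, a LeNet-5-style network adapted to this task might look like the sketch below. The layer sizes follow the classic LeNet-5 layout; ReLU and max pooling are a common modern substitute for the original tanh and average pooling. None of these sizes are taken from the benchmark paper or this project. The adaptive pooling layer is an added assumption so that patches of different resolutions fit the classifier, and the single output logit is meant for `BCEWithLogitsLoss`.

```python
import torch
from torch import nn


class LeNetBinary(nn.Module):
    """LeNet-5-style CNN for RGB patches with one output logit (layer sizes assumed)."""

    def __init__(self) -> None:
        super().__init__()
        self.features = nn.Sequential(
            nn.Conv2d(3, 6, kernel_size=5),   # RGB -> 6 feature maps
            nn.ReLU(),
            nn.MaxPool2d(2),
            nn.Conv2d(6, 16, kernel_size=5),  # 6 -> 16 feature maps
            nn.ReLU(),
            nn.MaxPool2d(2),
            # Force a 5x5 map so the classifier input size does not depend on patch size.
            nn.AdaptiveAvgPool2d((5, 5)),
        )
        self.classifier = nn.Sequential(
            nn.Flatten(),
            nn.Linear(16 * 5 * 5, 120),
            nn.ReLU(),
            nn.Linear(120, 84),
            nn.ReLU(),
            # One raw logit; with label 1 = "bad", a positive value leans towards "damaged".
            nn.Linear(84, 1),
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.classifier(self.features(x))
```

A network this small has only a few tens of thousands of parameters, which is one plausible reason it trains more stably than ResNet50 on a small, single-task dataset.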

Performance reported in the paper:

- Training accuracy: 96.4%

- Validation accuracy: 97%

- Precision: 96.26%

- Recall: 93.34%
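Precision and recall are computed from confusion counts with the damaged class as positive. The sketch below shows the definitions in plain Python and combines the paper's two figures into an F1 score. That F1 value is derived here by arithmetic and is not reported in the paper.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, fn) with label 1 ("bad", damaged) as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # damaged, flagged as damaged
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # healthy, flagged as damaged
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # damaged, missed
    return tp, fp, fn


def precision_recall(tp, fp, fn):
    """Precision = TP / (TP + FP), recall = TP / (TP + FN); 0.0 when undefined."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


paper_f1 = f1_score(0.9626, 0.9334)  # roughly 0.948
```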

🛠️ Implementation & Optimization

- Weight Initialization: Xavier (Glorot)

- Loss Function: BCEWithLogitsLoss

- Optimizers Compared: SGD vs Adam → Chosen: SGD

- Hyperparameter tuning: Grid Search

- Learning rate: 0.001

- Momentum: 0

- Weight decay: 0.001
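The listed choices can be wired together as in the sketch below: Xavier initialisation applied to every conv/linear layer, `BCEWithLogitsLoss`, SGD with the reported learning rate, momentum, and weight decay, and an exhaustive grid search. `evaluate` is a hypothetical callback standing in for "train a fresh model and return its validation score". The candidate values in `GRID` are also hypothetical, since only the final configuration is reported. The placeholder model is not the project's network.

```python
import itertools

import torch
from torch import nn


def init_xavier(module: nn.Module) -> None:
    """Xavier (Glorot) uniform init for conv/linear weights; biases set to zero."""
    if isinstance(module, (nn.Conv2d, nn.Linear)):
        nn.init.xavier_uniform_(module.weight)
        if module.bias is not None:
            nn.init.zeros_(module.bias)


def make_training_setup(model: nn.Module, lr=0.001, momentum=0.0, weight_decay=0.001):
    """Initialise `model` in place and return (loss_fn, optimizer)."""
    model.apply(init_xavier)  # visits every submodule recursively
    # BCEWithLogitsLoss applies the sigmoid internally, which is more numerically
    # stable than a Sigmoid layer followed by BCELoss.
    loss_fn = nn.BCEWithLogitsLoss()
    optimizer = torch.optim.SGD(
        model.parameters(), lr=lr, momentum=momentum, weight_decay=weight_decay
    )
    return loss_fn, optimizer


def grid_search(evaluate, grid):
    """Try every combination in `grid`; return (best_score, best_params).

    `evaluate(**params)` is a hypothetical callback returning a validation
    score where higher is better.
    """
    keys = list(grid)
    best_score, best_params = float("-inf"), None
    for values in itertools.product(*(grid[k] for k in keys)):
        params = dict(zip(keys, values))
        score = evaluate(**params)
        if score > best_score:
            best_score, best_params = score, params
    return best_score, best_params


# Hypothetical candidate values; the README reports only the selected ones.
GRID = {"lr": [0.01, 0.001], "momentum": [0.0, 0.9], "weight_decay": [0.0, 0.001]}

# One SGD step on a random batch with a placeholder model.
model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 32 * 32, 1))
loss_fn, optimizer = make_training_setup(model)
images = torch.randn(8, 3, 32, 32)
targets = torch.randint(0, 2, (8, 1)).float()  # assumed: 1 = bad, 0 = good
optimizer.zero_grad()
loss = loss_fn(model(images), targets)
loss.backward()
optimizer.step()
```

Grid search cost grows multiplicatively with each added hyperparameter (8 full training runs for the grid above), which matters under Colab's session limits.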

Checkpoints were saved with torch.save() so that training could resume across Colab's session time limits.
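A minimal checkpointing sketch follows. It stores the epoch together with the model and optimizer `state_dict`s, which is the pattern recommended in the PyTorch documentation for resuming training. The function names are hypothetical. On Colab the path would typically point to a mounted Google Drive folder (for example `/content/drive/MyDrive/...`), which is also an assumption.

```python
import torch
from torch import nn


def save_checkpoint(path, model: nn.Module, optimizer, epoch: int) -> None:
    """Save everything needed to resume training after a session timeout."""
    torch.save(
        {
            "epoch": epoch,
            "model_state_dict": model.state_dict(),
            "optimizer_state_dict": optimizer.state_dict(),
        },
        path,
    )


def load_checkpoint(path, model: nn.Module, optimizer) -> int:
    """Restore model and optimizer state in place; return the next epoch to run."""
    # map_location="cpu" lets a checkpoint written on a GPU runtime load anywhere.
    checkpoint = torch.load(path, map_location="cpu")
    model.load_state_dict(checkpoint["model_state_dict"])
    optimizer.load_state_dict(checkpoint["optimizer_state_dict"])
    return checkpoint["epoch"] + 1
```

Saving the optimizer state as well as the weights matters when momentum is non-zero, because the momentum buffers would otherwise restart from zero after a reload.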

🔍 Results

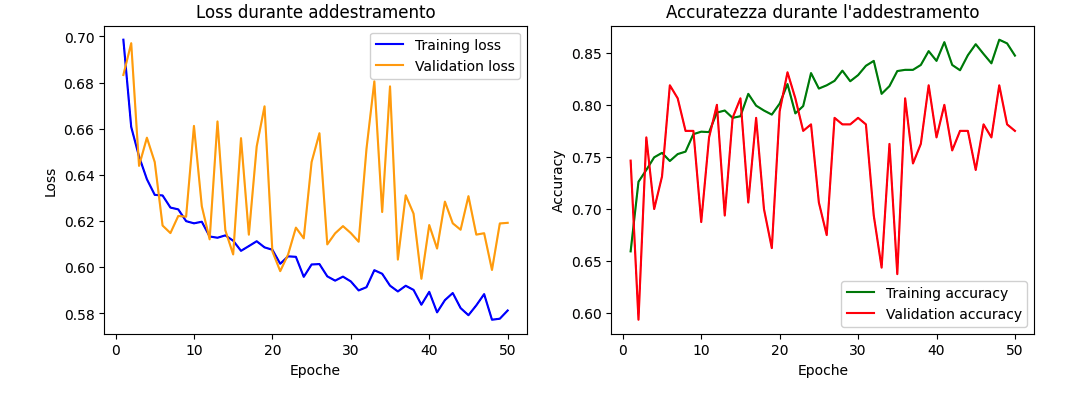

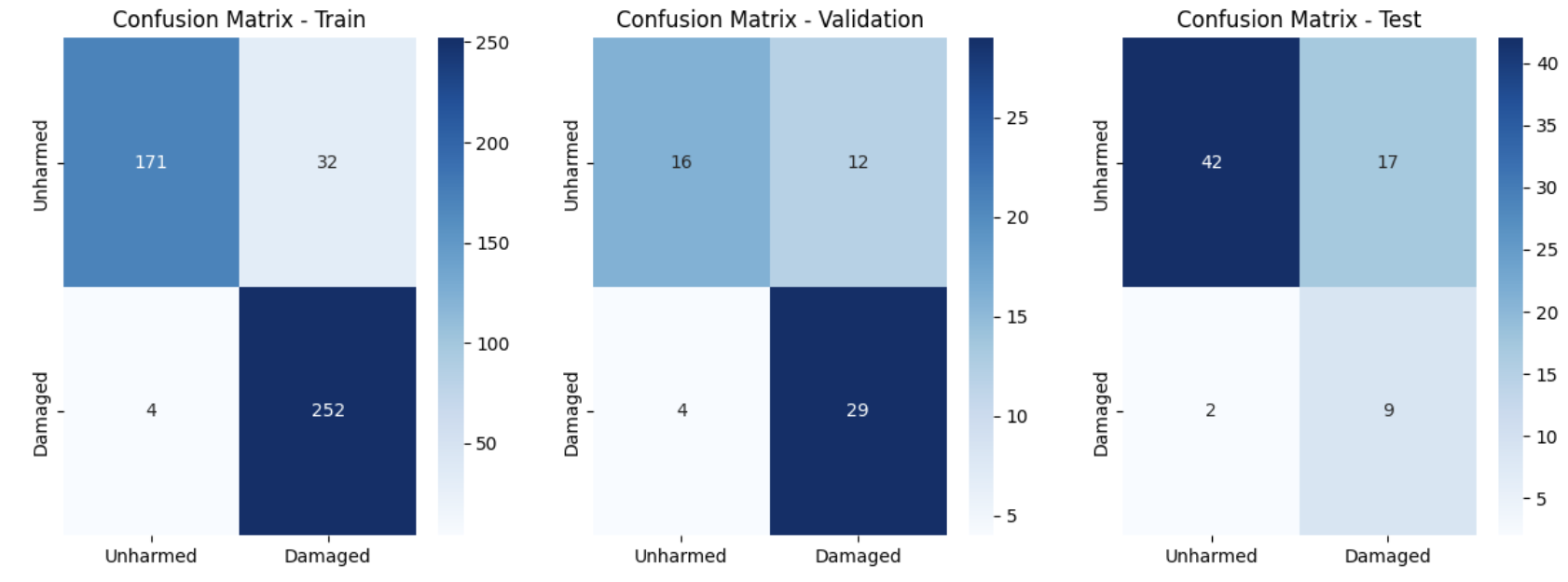

- Validation Accuracy: ~80%

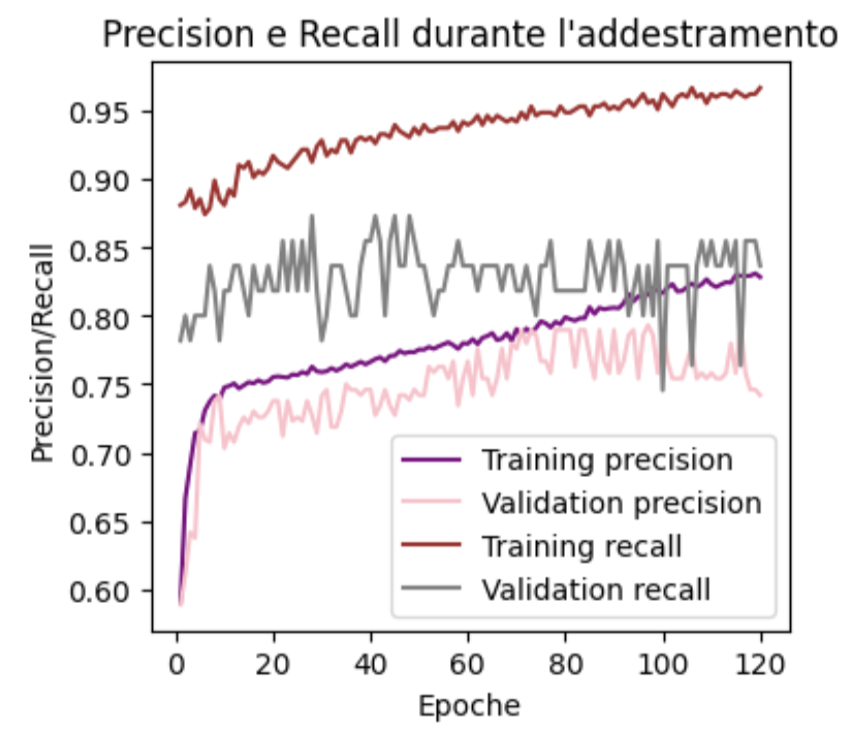

- Precision: 75%

- Recall: 85%

- Training stopped at epoch 70 to prevent overfitting.
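The README does not say which criterion triggered the stop at epoch 70. One common approach is patience-based early stopping on validation loss, sketched below. The class name, `patience`, and `min_delta` are hypothetical choices, not the project's settings.

```python
class EarlyStopping:
    """Signal a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience: int = 10, min_delta: float = 0.0) -> None:
        self.patience = patience
        self.min_delta = min_delta          # minimum decrease that counts as improvement
        self.best_loss = float("inf")
        self.best_epoch = None
        self.epochs_without_improvement = 0

    def step(self, epoch: int, val_loss: float) -> bool:
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience
```

In practice the weights from `best_epoch` would also be saved, so the final model is the best one seen rather than the last one trained.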

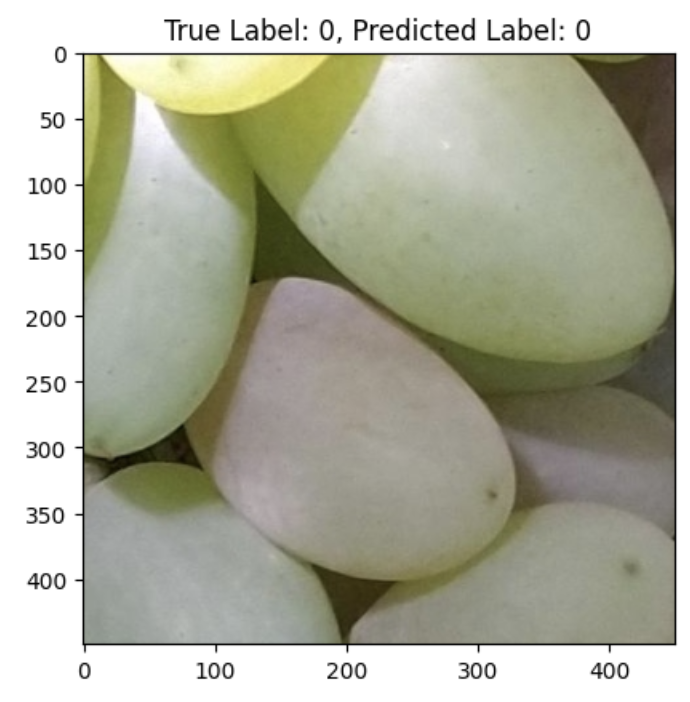

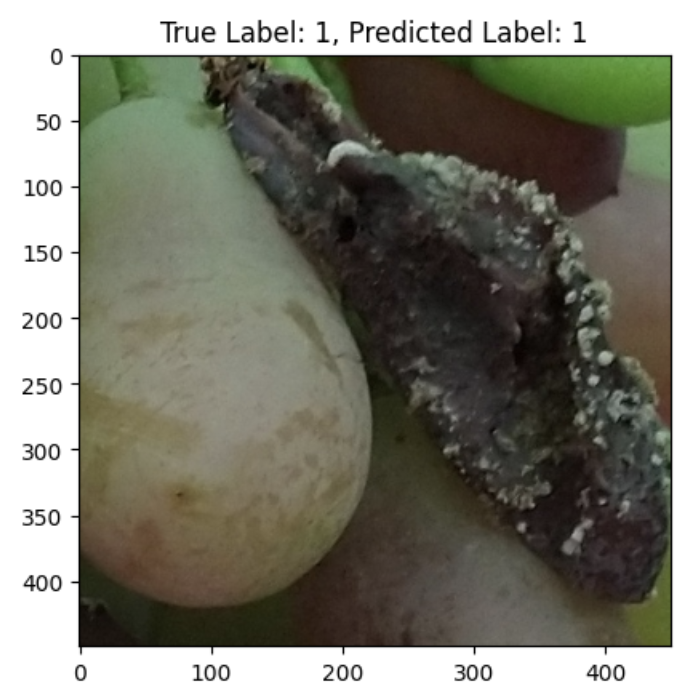

Strengths:

- Effective in detecting damaged berries with darker hues and rough textures.

Weaknesses:

- False positives: Blurry or shiny healthy grapes misclassified as damaged.

- False negatives: Subtly damaged grapes within healthy clusters misclassified as healthy.

🧾 Conclusions

While the model performed reasonably well, it did not match the reference paper (about 80% versus 97% validation accuracy). The gap is attributed to dataset quality issues:

- Mixed-class patches (both healthy and damaged berries)

- Grapes not precisely centered in the patches, unlike in the benchmark dataset

📌 Main insight: Better image acquisition and labeling are crucial for achieving state-of-the-art results.

📄 Download Report

You can download the full project report here.