Maestro: Conduct Music with Your Hands!

Course project for "Multisensory Interaction Systems" @ UniTN

🎶 Maestro: testing haptics as the primary feedback channel in a multisensory, interactive music-making system

Maestro is an experimental project exploring a futuristic way to make music — no piano, no guitar… just your hands, a smart glove 🧤, a baton 🪄, and a bit of magic ✨ (well, tech magic).

Inspired by orchestra conductors, Maestro lets users control musical loops through gestures and receive haptic feedback — gentle vibrations that make the experience more tactile and intuitive. The system is designed for anyone, even if you’ve never played an instrument before. All you need is rhythm and curiosity!

🎥 Demo Video and Report

🛠️ The System Components

The system is made up of:

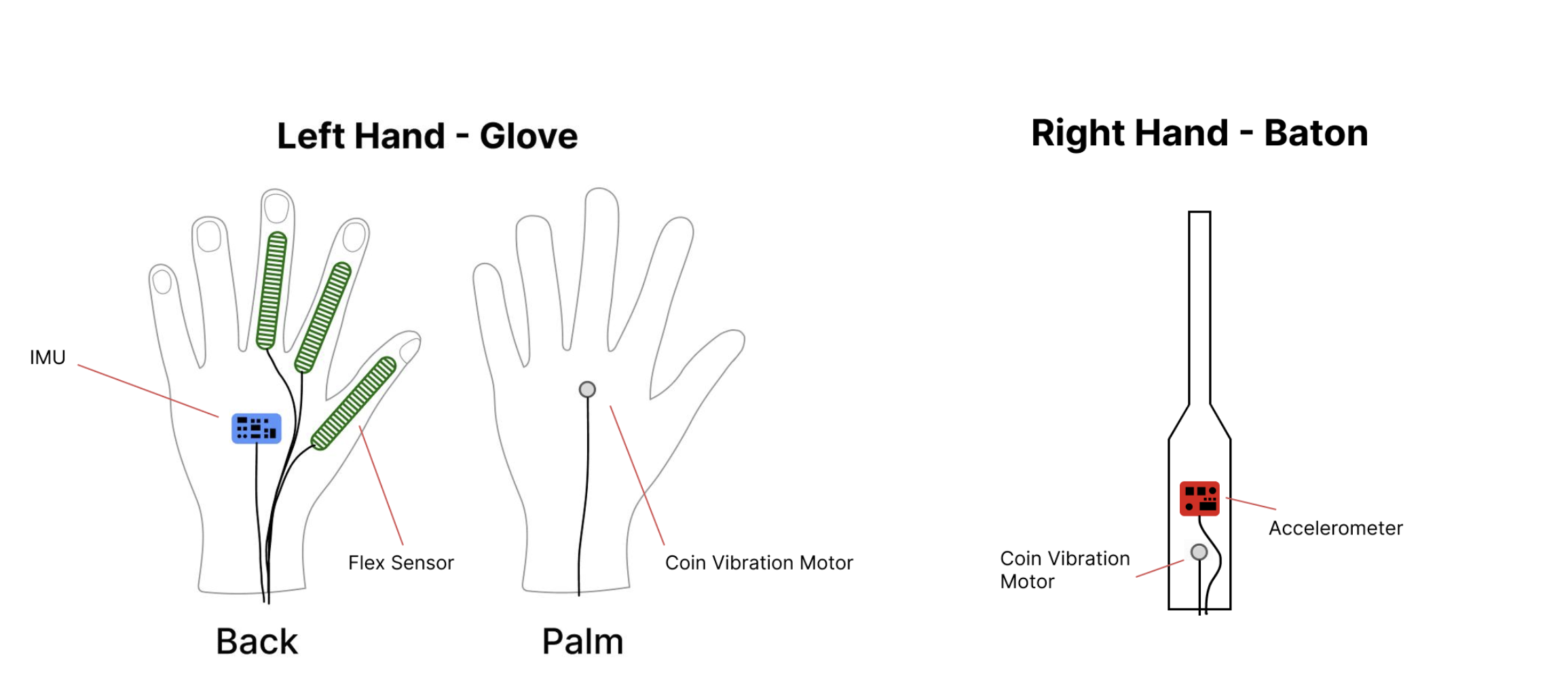

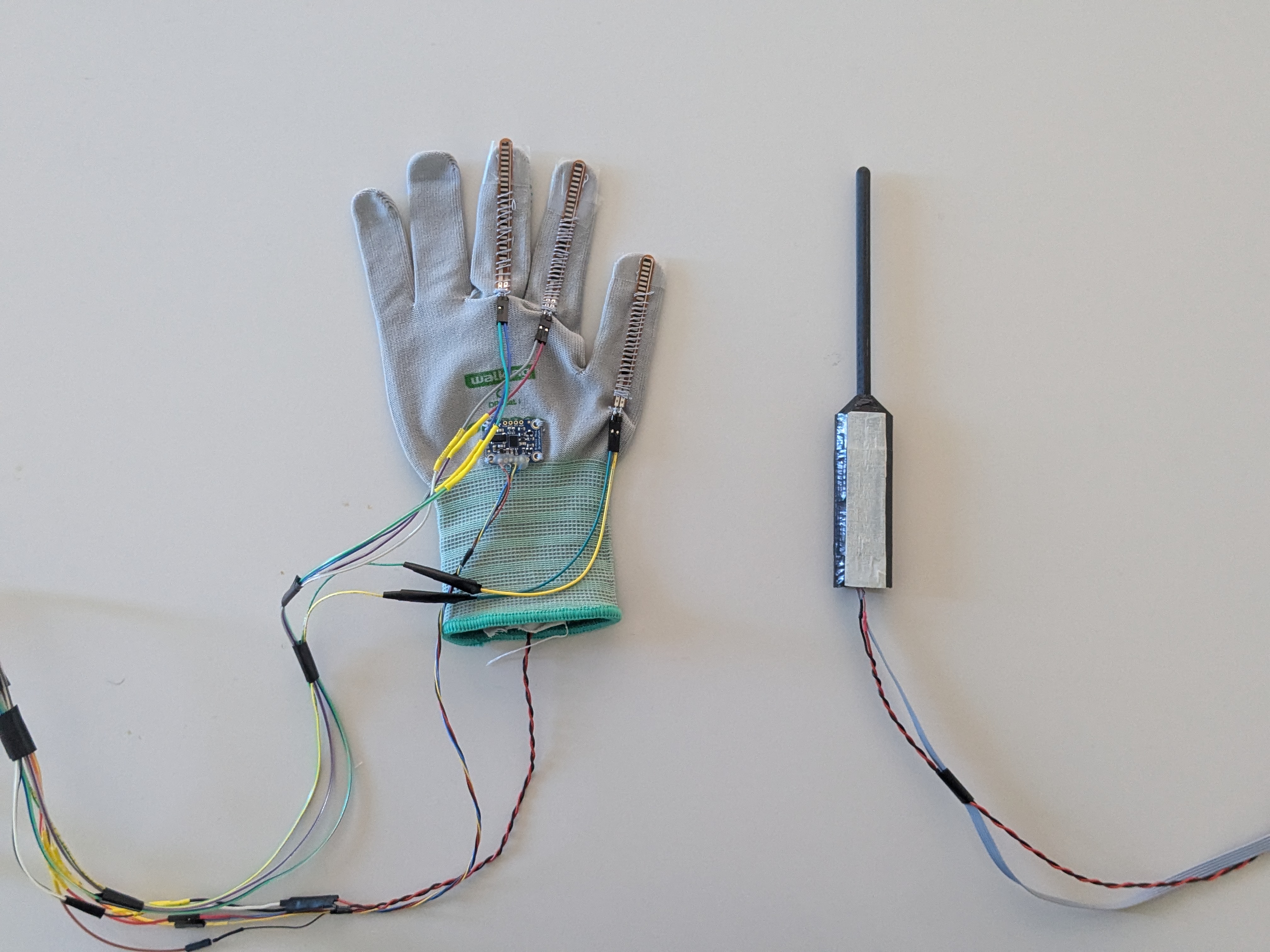

- A glove with flex sensors, an IMU, and a vibration motor (hardware/glove/)

- A baton with an accelerometer for beat tracking (hardware/baton/)

- A Teensy microcontroller to gather and transmit data (firmware/teensy/maestro.ino)

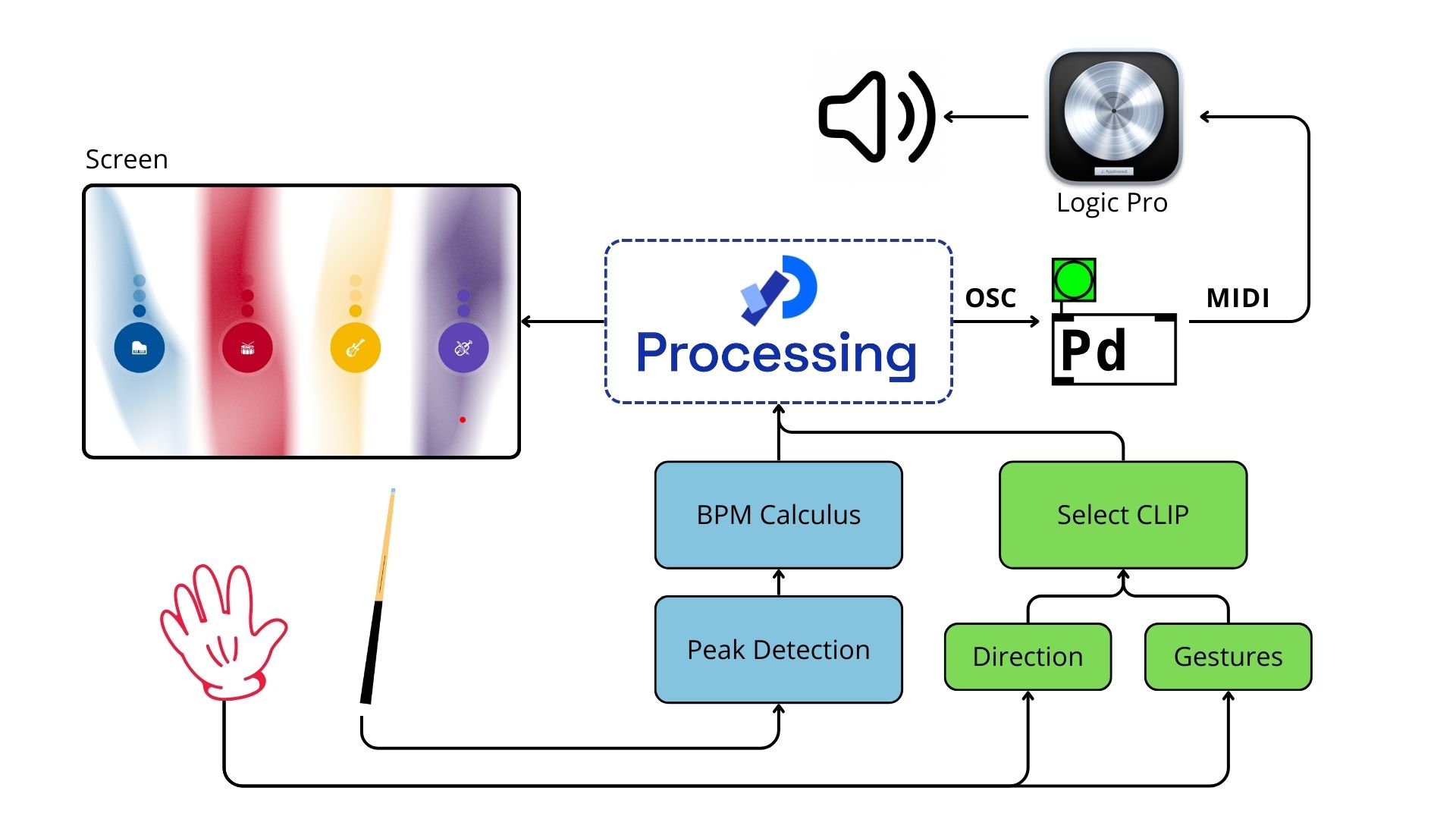

- A Processing sketch to display the visual interface (software/processing/MaestroGUI.pde)

- A Pure Data patch for triggering and controlling audio clips (software/puredata/maestro.pd)

🎯 Project Goal

The goal? See if adding haptics (vibrations) improves the playability and overall feel of this kind of “air instrument”.

💡 How It Works

When you wear the glove and pick up the baton:

- Your left hand (the glove) selects instruments and adjusts their intensity

- Your right hand (the baton) keeps the beat — just like a real conductor!

- Feedback vibrations give you a tactile “yes!” when you select something or hit the beat

A projected interface helps guide your movements, with four musical tracks (such as drums 🥁, bass 🎸, and synth 🎹) and three levels of complexity.

🔬 Tested with Real People!

We ran tests with 18 participants — musicians and total beginners alike. They played with and without haptic feedback, and we compared:

- How well they kept tempo 🎵

- How easily they navigated the interface 🧭

- How much fun they had 😄

Results? With haptics switched on, the glove was a hit: people found it easier to use and more satisfying. The baton still has room to grow (timing control was tricky), but overall the feedback made the experience feel more immersive.

Check out the results in the report.
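Curious how "keeping tempo" could be turned into a number? Here is a minimal sketch, assuming beat timestamps in seconds and a known target BPM. It is one common way to score timing, not necessarily the measure used in the study; the function name `tempo_error_ms` is made up for illustration.

```python
from statistics import mean
from typing import Sequence


def tempo_error_ms(beat_times_s: Sequence[float], target_bpm: float) -> float:
    """Mean absolute deviation of inter-beat intervals from the target, in ms.

    Hypothetical metric for illustration: 0.0 means perfectly steady beats
    at exactly the target tempo.
    """
    if len(beat_times_s) < 2:
        raise ValueError("need at least two beats to measure an interval")
    target_interval_s = 60.0 / target_bpm
    # Time between each pair of consecutive beats.
    intervals = [b - a for a, b in zip(beat_times_s, beat_times_s[1:])]
    return 1000.0 * mean(abs(i - target_interval_s) for i in intervals)
```

For example, `tempo_error_ms([0.0, 0.6, 1.0], 120)` compares the intervals 0.6 s and 0.4 s against the 0.5 s target and reports an average error of about 100 ms.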

🔍 Behind the Scenes

The glove recognizes gestures like:

- 👆 Pointing

- ✋ Open hand

- ✊ Fist (to lock in choices)
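To give a feel for how gestures like these could be told apart, here is a minimal Python sketch. It assumes one flex reading per finger, normalized so that 0.0 is straight and 1.0 is fully curled. The five-sensor layout, the `BENT_THRESHOLD` value, and the `classify_gesture` name are all assumptions for illustration; the actual logic lives in the firmware and may work differently.

```python
from typing import Sequence

# Hypothetical threshold: a normalized bend above this counts as "curled".
BENT_THRESHOLD = 0.6


def classify_gesture(bend: Sequence[float]) -> str:
    """Classify a hand pose from five normalized flex readings.

    Assumed order: thumb, index, middle, ring, pinky.
    The thumb is ignored to keep the sketch simple.
    """
    if len(bend) != 5:
        raise ValueError("expected five flex readings")
    curled = [value > BENT_THRESHOLD for value in bend]
    _thumb, index, middle, ring, pinky = curled
    others = (middle, ring, pinky)

    if not index and all(others):
        return "pointing"   # index extended, remaining fingers curled
    if not index and not any(others):
        return "open_hand"  # all four fingers extended
    if index and all(others):
        return "fist"       # all four fingers curled
    return "unknown"        # anything in between
```

A real glove would also need per-user calibration and some smoothing over time so that a pose does not flicker between labels.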

The baton detects beat motion and vibrates to confirm a successful hit. All data is processed in real time on the Teensy, sent to Processing for the visuals, and translated into audio commands in Pure Data. The system can even talk to a DAW over MIDI.
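Beat detection from an accelerometer can be sketched as a simple threshold with a short lockout. The Python below is an illustration under stated assumptions, not the project's firmware: samples are `(time_s, ax, ay, az)` tuples in g, and `threshold_g`, `refractory_s`, and `detect_beats` are hypothetical names and values.

```python
import math
from typing import Iterable, List, Tuple

Sample = Tuple[float, float, float, float]  # (time_s, ax, ay, az), accel in g


def detect_beats(samples: Iterable[Sample],
                 threshold_g: float = 2.0,
                 refractory_s: float = 0.25) -> List[float]:
    """Return timestamps where acceleration magnitude crosses the threshold.

    A beat fires on a rising crossing, and only if at least `refractory_s`
    seconds have passed since the previous beat (both values are assumptions).
    """
    beats: List[float] = []
    last_beat = -math.inf
    armed = True  # True once the signal has dropped back below the threshold
    for t, ax, ay, az in samples:
        magnitude = math.sqrt(ax * ax + ay * ay + az * az)
        if magnitude < threshold_g:
            armed = True
            continue
        if armed and t - last_beat >= refractory_s:
            beats.append(t)
            last_beat = t
        armed = False  # ignore the rest of this spike
    return beats
```

In a live setup, each detected beat would be the moment to fire the confirming vibration pulse and forward a trigger to the audio side.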

📈 What We Learned

- ✅ Haptics can really help in “empty-hand” musical interfaces

- 🎯 The glove’s usability got a big boost with vibrations

- ⚠️ The baton needs improvements in timing detection and user control

- 🧠 Gesture recognition could be refined to be more flexible and to give clearer feedback

Want more? Read the full discussion and future directions in the report.